Probability is the most language-sensitive topic in IB Mathematics Analysis & Approaches HL. Almost every mark hinges on whether a student correctly translates words like "given that", "at least", "and", or "or" into the right symbols. The traps are notorious: confusing $P(A \cap B)$ with $P(A \mid B)$, treating mutually exclusive as the same as independent, or forgetting that without-replacement problems force the denominator to shrink. At HL there is one further weapon — Bayes' theorem (AHL 4.13) — which often appears disguised as a medical-test or quality-control problem.

This cheatsheet condenses every formula, definition, and exam strategy from SL 4.5–4.6 and AHL 4.13 into one revisable page. It walks through Venn diagrams, the addition rule, conditional probability, independence vs mutual exclusivity, two- and three-stage tree diagrams, repeated-game geometric series, and the four-step Bayes method.

§1 — Core Definitions & Venn Basics SL 4.5–4.6

Core probability axioms

For any event $A$ in sample space $U$: $0 \le P(A) \le 1$, $P(U) = 1$, and $P(A') = 1 - P(A)$.

Addition rule

$$P(A \cup B) = P(A) + P(B) - P(A \cap B)$$

De Morgan: $P(A' \cap B') = 1 - P(A \cup B)$.

The four Venn regions sum to 1:

$$P(A \cap B') + P(A \cap B) + P(A' \cap B) + P(A' \cap B') = 1$$

Useful identity: $P(A) = P(A \cap B) + P(A \cap B')$.

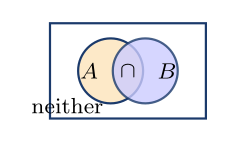

2-set Venn diagram fill order

- Fill $A \cap B$ (the centre) first.

- Then $A$-only $= P(A) - P(A \cap B)$.

- Then $B$-only $= P(B) - P(A \cap B)$.

- Then "neither" $= 1 - $ everything else.
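The fill order above can be sketched in a few lines of Python. This is a minimal illustration, not an official IB method: the function name `venn2_regions` and the sample values are assumptions chosen for the example, and exact fractions are used so the regions can be checked by hand.

```python
from fractions import Fraction

def venn2_regions(p_a, p_b, p_a_and_b):
    """Return the four regions of a 2-set Venn diagram, filled centre-first."""
    both = p_a_and_b                          # step 1: the centre
    a_only = p_a - both                       # step 2: A only
    b_only = p_b - both                       # step 3: B only
    neither = 1 - (both + a_only + b_only)    # step 4: outside both circles
    regions = {"both": both, "a_only": a_only, "b_only": b_only, "neither": neither}
    if any(value < 0 for value in regions.values()):
        raise ValueError("inconsistent inputs: a region would be negative")
    return regions

# Hypothetical values: P(A) = 1/2, P(B) = 2/5, P(A and B) = 1/5
regions = venn2_regions(Fraction(1, 2), Fraction(2, 5), Fraction(1, 5))
print(regions)
```

A negative region is a useful warning sign in an exam too: it usually means a given value was misread as "only" rather than "total".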

3-set Venn — inside-out rule

- Fill $A \cap B \cap C$ (the centre).

- Each pairwise-only region $=$ pair $-$ centre.

- Each circle-only region $=$ circle $-$ all overlaps inside it.

- Neither $= 1 - $ everything filled.
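The inside-out rule for three sets can be sketched the same way. The function name `venn3_regions`, its argument order, and the sample numbers are assumptions for this illustration; the pairwise inputs are taken to be whole intersections (each one includes the centre).

```python
from fractions import Fraction

def venn3_regions(a, b, c, ab, ac, bc, abc):
    """Fill a 3-set Venn diagram inside-out.

    a, b, c    : whole-circle probabilities
    ab, ac, bc : whole pairwise intersections (each includes the centre)
    abc        : the centre, P(A and B and C)
    """
    ab_only = ab - abc                       # pair minus centre
    ac_only = ac - abc
    bc_only = bc - abc
    a_only = a - (ab_only + ac_only + abc)   # circle minus all overlaps inside it
    b_only = b - (ab_only + bc_only + abc)
    c_only = c - (ac_only + bc_only + abc)
    filled = abc + ab_only + ac_only + bc_only + a_only + b_only + c_only
    return {
        "abc": abc, "ab_only": ab_only, "ac_only": ac_only, "bc_only": bc_only,
        "a_only": a_only, "b_only": b_only, "c_only": c_only,
        "neither": 1 - filled,
    }

# Hypothetical values, written in twentieths for easy checking
F = Fraction
venn3 = venn3_regions(F(10, 20), F(8, 20), F(6, 20), F(4, 20), F(2, 20), F(2, 20), F(1, 20))
print(venn3)
```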

§2 — Mutually Exclusive & Conditional Probability SL 4.5–4.6

Mutually exclusive (ME)

$P(A \cap B) = 0$ — the two events cannot both occur. The addition rule simplifies to $P(A \cup B) = P(A) + P(B)$.

Test: is $P(A \cap B) = 0$? Key fact: if $P(A), P(B) > 0$, then ME implies not independent.

Conditional probability

$$P(A \mid B) = \dfrac{P(A \cap B)}{P(B)}$$

Rearranged (this is the rule when multiplying along a tree branch):

$$P(A \cap B) = P(B) \cdot P(A \mid B) = P(A) \cdot P(B \mid A)$$

Intuition: restrict the universe to $B$, then ask how much of $B$ is also in $A$.

Finding $P(B)$ algebraically

Given $P(A \mid B)$, $P(A)$, and $P(A \cup B)$:

- $P(A \cap B) = P(B) \cdot P(A \mid B)$

- Substitute into the addition rule: $P(A \cup B) = P(A) + P(B) - P(B) \cdot P(A \mid B)$

- Solve for $P(B)$.
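As a quick illustration of these three steps, take the assumed values $P(A) = 0.5$, $P(A \mid B) = 0.25$ and $P(A \cup B) = 0.8$ (chosen for this sketch, not taken from a past paper):

$$0.8 = 0.5 + P(B) - 0.25\,P(B) \;\Rightarrow\; 0.3 = 0.75\,P(B) \;\Rightarrow\; P(B) = 0.4$$

Check: $P(A \cap B) = 0.4 \times 0.25 = 0.1$, and $0.5 + 0.4 - 0.1 = 0.8$ as required.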

§3 — Independent Events SL 4.6

Three equivalent definitions

$A$ and $B$ are independent iff any one of these holds (the conditional forms require $0 < P(B) < 1$):

$$P(A \cap B) = P(A) \cdot P(B), \quad P(A \mid B) = P(A), \quad P(A \mid B') = P(A)$$

Test steps (always state your conclusion in words!):

- Find $P(A \cap B)$.

- Compute $P(A) \cdot P(B)$.

- Equal? $\Rightarrow$ independent. Not equal? $\Rightarrow$ not independent.

- State: "Since $P(A \cap B) = P(A) \cdot P(B)$, the events are independent."
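The test steps above can be sketched as a small check. The helper name `is_independent` and the sample values are assumptions for illustration; exact fractions are used because comparing floating-point products for equality can give misleading results.

```python
from fractions import Fraction

def is_independent(p_a, p_b, p_a_and_b):
    """True when P(A and B) equals P(A) * P(B) exactly."""
    return p_a_and_b == p_a * p_b

def conclusion(p_a, p_b, p_a_and_b):
    """Return the worded conclusion that should follow the calculation."""
    if is_independent(p_a, p_b, p_a_and_b):
        return "Since P(A ∩ B) = P(A)·P(B), the events are independent."
    return "Since P(A ∩ B) ≠ P(A)·P(B), the events are not independent."

# Hypothetical case 1: 1/2 * 2/5 = 1/5, which matches the intersection
print(conclusion(Fraction(1, 2), Fraction(2, 5), Fraction(1, 5)))
# Hypothetical case 2: same P(A) and P(B), but the intersection is 1/4
print(conclusion(Fraction(1, 2), Fraction(2, 5), Fraction(1, 4)))
```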

Independence with algebra

If independent and given $P(A \cup B)$:

$$P(A \cup B) = P(A) + P(B) - P(A) \cdot P(B)$$

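Let $a = P(A)$, $b = P(B)$ and solve the system. IB-style trick: given $P(A \cap B')$ and $P(A \cup B)$, subtract to get $P(B) = P(A \cup B) - P(A \cap B')$. This step holds for any two events; independence is then used to find $P(A)$ from $P(A \cap B') = P(A)\,(1 - P(B))$.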
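A short worked illustration, using the assumed values $P(A \cap B') = 0.3$ and $P(A \cup B) = 0.7$ with $A$ and $B$ independent (numbers chosen for this sketch only):

$$P(B) = P(A \cup B) - P(A \cap B') = 0.7 - 0.3 = 0.4$$

$$P(A \cap B') = P(A)\,(1 - P(B)) \;\Rightarrow\; 0.3 = 0.6\,P(A) \;\Rightarrow\; P(A) = 0.5$$

Check: $P(A) + P(B) - P(A)\,P(B) = 0.5 + 0.4 - 0.2 = 0.7$, which agrees with the given $P(A \cup B)$.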

Mutually exclusive vs independent

| Mutually exclusive | Independent |
|---|---|
| $P(A \cap B) = 0$ | $P(A \cap B) = P(A) P(B)$ |
| Cannot both occur | Knowing $A$ doesn't affect $B$ |
| $P(A \mid B) = 0$ | $P(A \mid B) = P(A)$ |

If $P(A), P(B) > 0$, the events cannot be both ME and independent: ME gives $P(A \cap B) = 0$, while independence would require $P(A \cap B) = P(A) P(B) > 0$.

§4 — Tree Diagrams SL 4.5–4.6

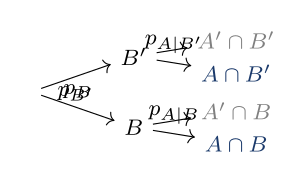

Tree diagram rules

- Along a branch: multiply.

- Between separate paths: add.

- At each node: the branches must sum to 1.

- Path probability: $P(B) \cdot P(A \mid B) = P(A \cap B)$.

- Total probability law: $P(A) = \displaystyle\sum_i P(B_i) \cdot P(A \mid B_i)$.

- With/without replacement: without replacement, the fractions change at each stage.
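The rules above can be sketched for a two-stage draw. The bag contents (5 red, 3 blue) and the function name `two_draw_paths` are assumptions made for this illustration; the point is to show where the fractions change without replacement and where multiplying and adding happen.

```python
from fractions import Fraction

def two_draw_paths(red, blue, replace=False):
    """Return the probability of each two-draw path, e.g. 'RB' = red then blue."""
    counts = {"R": red, "B": blue}
    total = red + blue
    paths = {}
    for first in counts:
        p_first = Fraction(counts[first], total)
        remaining = dict(counts)
        remaining_total = total
        if not replace:
            remaining[first] -= 1        # one ball of that colour is gone
            remaining_total -= 1         # so the denominator shrinks too
        for second in counts:
            p_second = Fraction(remaining[second], remaining_total)
            paths[first + second] = p_first * p_second   # multiply along a branch
    return paths

paths = two_draw_paths(5, 3)                 # without replacement
same_colour = paths["RR"] + paths["BB"]      # add between separate paths
second_red = paths["RR"] + paths["BR"]       # total probability law
print(paths, same_colour, second_red)
```

In this sketch the probability that the second ball is red comes out equal to the first-draw probability of red, a standard result that is a handy sanity check on any without-replacement tree.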

Repeated game / geometric series

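Two players alternate turns, and each turn succeeds with the same probability $p$, independently of earlier turns (for example, drawing with replacement). The player who goes first can only win on turns $1, 3, 5, \ldots$, so the probability they eventually win is: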

$$P(\text{win}) = \sum_{k=0}^{\infty} (1-p)^{2k} \cdot p = \dfrac{p}{1 - (1-p)^2}$$

General: $S_\infty = \dfrac{a}{1 - r}$ for $|r| < 1$; here $a = p$ and $r = (1-p)^2$. Typical IB question: Player A starts; find the probability A wins eventually.
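A minimal sketch comparing the closed form with a long partial sum, assuming both players succeed with the same probability $p$ on every turn. The function names and the choice $p = \tfrac{1}{6}$ (rolling a six, say) are illustrative assumptions.

```python
from fractions import Fraction

def first_player_win_probability(p):
    """Closed form a / (1 - r) with a = p and r = (1 - p)^2."""
    if not 0 < p <= 1:
        raise ValueError("p must satisfy 0 < p <= 1")
    q = 1 - p
    return p / (1 - q ** 2)

def partial_sum(p, terms):
    """Sum of the first `terms` terms: wins on turn 1, 3, 5, ..."""
    q = 1 - p
    return sum(q ** (2 * k) * p for k in range(terms))

p = Fraction(1, 6)
exact = first_player_win_probability(p)   # exact fraction, here 6/11
approx = partial_sum(float(p), 200)       # numerical check of the infinite sum
print(exact, approx)
```

The first player's advantage shows up clearly: the result is above one half even though both players have the same chance on any single turn.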

§5 — Bayes' Theorem AHL 4.13

Formula (in the booklet)

$$P(A_j \mid B) = \dfrac{P(A_j) \cdot P(B \mid A_j)}{\displaystyle\sum_i P(A_i) \cdot P(B \mid A_i)}$$

Tree-diagram version:

$$P(A_j \mid B) = \dfrac{\text{desired path to } B}{\text{ALL paths to } B}$$

4-step Bayes method

- Draw the tree (causes first, observed result second).

- Compute each path to $B$: $P(A_i) \cdot P(B \mid A_i)$.

- Sum ALL paths to $B$ to get $P(B)$.

- $P(A_j \mid B) = $ desired path $\div$ total.
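Steps 2 to 4 of the method can be sketched as one short function. The name `bayes_posteriors` and the two-cause numbers are assumptions for illustration only; priors are passed as exact fractions so the "branches sum to 1" check is reliable.

```python
from fractions import Fraction

def bayes_posteriors(priors, likelihoods):
    """Return (posteriors, total) where posteriors[i] = P(A_i | B), total = P(B).

    priors[i] is P(A_i); likelihoods[i] is P(B | A_i).
    """
    if sum(priors) != 1:
        raise ValueError("priors must sum to 1 (first-stage branches)")
    paths = [p * l for p, l in zip(priors, likelihoods)]   # step 2: each path to B
    total = sum(paths)                                     # step 3: P(B)
    if total == 0:
        raise ValueError("P(B) = 0, so conditioning on B is undefined")
    return [path / total for path in paths], total         # step 4: path / total

# Hypothetical two-cause example: P(A1) = 3/5, P(A2) = 2/5,
# P(B | A1) = 1/10, P(B | A2) = 1/4
posteriors, p_b = bayes_posteriors(
    [Fraction(3, 5), Fraction(2, 5)],
    [Fraction(1, 10), Fraction(1, 4)],
)
print(posteriors, p_b)
```

The posteriors always sum to 1, which gives a quick check that no path to $B$ was left out of the denominator.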

3-cause version (medical test pattern)

Causes $A_1, A_2, A_3$, observed $B$:

$$P(B) = P(A_1) P(B \mid A_1) + P(A_2) P(B \mid A_2) + P(A_3) P(B \mid A_3)$$

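The medical-test pattern is the two-cause special case, with causes "disease" and "no disease" and observed result "+":

- Has disease: $P(+ \mid \text{disease}) = $ sensitivity.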

- No disease: $P(+ \mid \text{no disease}) = $ false positive rate.

- Question: find $P(\text{disease} \mid +)$.
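For a sense of scale, here is a sketch with assumed figures (not from any real test): prevalence $1\%$, sensitivity $95\%$, false positive rate $5\%$.

$$P(+) = 0.01 \times 0.95 + 0.99 \times 0.05 = 0.0095 + 0.0495 = 0.059$$

$$P(\text{disease} \mid +) = \dfrac{0.0095}{0.059} \approx 0.161$$

Even with a seemingly accurate test, most positives in this sketch are false positives, because the "no disease" branch is so much larger.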

Quadratic probability problems

Trees often produce a product of complements, e.g. $(1 - k)(1 - \tfrac{k}{2}) = c$. Expand to get a quadratic in $k$. Always check both roots and keep only those satisfying $0 \le k \le 1$.

IB 2024 May P1 example: $(1 - k)(1 - \tfrac{k}{2}) = \tfrac{5}{9} \Rightarrow 9k^2 - 27k + 8 = 0$, roots $\tfrac{1}{3}$ or $\tfrac{8}{3}$. Reject $\tfrac{8}{3} > 1$. Answer: $k = \tfrac{1}{3}$.
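The root check can be sketched as a small helper. The function name `valid_probability_roots` is an assumption for illustration; it solves $ak^2 + bk + c = 0$ with the quadratic formula and keeps only roots in $[0, 1]$, here applied to the quadratic quoted above.

```python
import math

def valid_probability_roots(a, b, c):
    """Real roots of a*k^2 + b*k + c = 0 that lie in [0, 1], in ascending order."""
    if a == 0:
        raise ValueError("not a quadratic: a must be non-zero")
    disc = b * b - 4 * a * c
    if disc < 0:
        return []                                  # no real roots at all
    sqrt_disc = math.sqrt(disc)
    roots = {(-b + sign * sqrt_disc) / (2 * a) for sign in (1, -1)}
    return sorted(r for r in roots if 0 <= r <= 1)

# 9k^2 - 27k + 8 = 0, from (1 - k)(1 - k/2) = 5/9
roots = valid_probability_roots(9, -27, 8)
print(roots)

# Substitute back into the original tree equation as a final check
k = roots[0]
print((1 - k) * (1 - k / 2))
```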

§6 — Exam Attack Plan & Traps All sections

Attack plan — when you see, do this

| See... | Do... |
|---|---|
| $P(A \cup B)$ given, find $P(A \cap B)$ | Addition rule, rearranged |
| Find $P(A' \mid B')$ | $\dfrac{1 - P(A \cup B)}{P(B')}$ |
| "Given that..." | Conditional formula or tree branch |
| "Show $A$ and $B$ are independent" | Check $P(A \cap B) = P(A) P(B)$, state conclusion |
| "Given late / +ve, find cause" | Bayes via tree |
| Without replacement | Change fractions at each stage |
| "Turns until first win" | Geometric series $\dfrac{a}{1 - r}$ |
| Quadratic from tree | Check $0 \le p \le 1$ for both roots |
| 3-set Venn numbers | Inside-out: centre, pairs, singles |

Top exam traps

- Add along paths, multiply across — wrong! It's the reverse: multiply along a branch, add between paths.

- Forgetting the $-P(A \cap B)$ term in the addition rule.

- $P(A \mid B) \neq P(B \mid A)$ — swapping these kills marks.

- ME $\neq$ independent — state this clearly when relevant.

- Not stating the conclusion: "therefore $A$ and $B$ are independent" earns the R1.

- Quadratic: are both roots valid? Always check $0 \le p \le 1$.

- Without replacement: denominators decrease.

- Bayes: the denominator must be the total $P(B)$, not 1.

Key formula reference

| Quantity | Formula |
|---|---|
| $P(A')$ | $= 1 - P(A)$ |
| $P(A \cup B)$ | $= P(A) + P(B) - P(A \cap B)$ |
| $P(A' \cap B')$ | $= 1 - P(A \cup B)$ |
| $P(A \mid B)$ | $= \dfrac{P(A \cap B)}{P(B)}$ |
| Independence | $P(A \cap B) = P(A) P(B)$ |
| ME | $P(A \cap B) = 0$ |
| Total probability | $P(B) = \displaystyle\sum_i P(A_i) P(B \mid A_i)$ |
| Geometric series | $\dfrac{a}{1 - r}, \; \lvert r \rvert < 1$ |

IB mark-scheme notes

- M1 — method correct (e.g. set up Bayes).

- A1 — answer correct (final value).

- (A1) — intermediate correct answer.

- R1 — reasoning / conclusion required.

- AG — answer given (every step must be shown).

For $P(A \mid B)$ questions, always show $P(A \cap B)$ and $P(B)$ separately before dividing. For independence questions, you must state: "since $P(A \cap B) = P(A) \cdot P(B)$, they are independent."

Worked Example — IB-Style Bayes' Theorem

Question (HL Paper 2 style — 7 marks)

A factory has three machines $M_1$, $M_2$, $M_3$ that produce 30%, 50%, and 20% of the daily output respectively. Their defect rates are 2%, 4%, and 5%. A randomly selected item is found to be defective.

(a) Find the probability that the item came from machine $M_2$.

(b) Comment on whether knowing the item is defective makes $M_2$ a more likely source than its prior probability of 50% suggests.

Solution

- Let $D$ be "defective". Compute each path to $D$: $P(M_1)P(D \mid M_1) = 0.30 \times 0.02 = 0.006$; $P(M_2)P(D \mid M_2) = 0.50 \times 0.04 = 0.020$; $P(M_3)P(D \mid M_3) = 0.20 \times 0.05 = 0.010$. (M1)(A1)

- Total $P(D) = 0.006 + 0.020 + 0.010 = 0.036$. (A1)

- By Bayes: $P(M_2 \mid D) = \dfrac{P(M_2) P(D \mid M_2)}{P(D)} = \dfrac{0.020}{0.036}$. (M1)

- $P(M_2 \mid D) = \dfrac{20}{36} = \dfrac{5}{9} \approx 0.556$. (A1)

- Compare to prior: the prior $P(M_2) = 0.50$. The posterior $\approx 0.556 > 0.50$. (R1)

- Conclusion: knowing the item is defective increases the probability it came from $M_2$, because $M_2$'s defect rate (4%) is above the overall rate ($\approx 3.6\%$). (R1)

Examiner's note: The most common slip is computing $P(D \mid M_2) = 0.04$ and stopping. That answers a different question. Always remember the denominator in Bayes is the total probability $P(D)$, summed over every cause — not 1, and not the prior $P(M_2)$.
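The arithmetic in the solution can be checked with exact fractions. This is a verification sketch using the figures from the question; the variable names are illustrative. It also extends part (b) by comparing prior and posterior for all three machines.

```python
from fractions import Fraction

# Figures from the question: share of output and defect rate per machine
priors = {"M1": Fraction(30, 100), "M2": Fraction(50, 100), "M3": Fraction(20, 100)}
defect_rates = {"M1": Fraction(2, 100), "M2": Fraction(4, 100), "M3": Fraction(5, 100)}

# Each path to "defective", then the total P(D)
paths = {m: priors[m] * defect_rates[m] for m in priors}
p_defective = sum(paths.values())

# Posterior for every machine: desired path / all paths
posteriors = {m: paths[m] / p_defective for m in priors}

for m in priors:
    direction = "up" if posteriors[m] > priors[m] else "down"
    print(m, "prior", priors[m], "posterior", posteriors[m], direction)
```

A machine's posterior rises exactly when its defect rate is above the overall rate $P(D)$, which matches the reasoning given in the conclusion for $M_2$.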

Common Student Questions

What is the difference between mutually exclusive and independent events?

Why do I keep getting Bayes' theorem questions wrong?

When do I add probabilities and when do I multiply?

How do I solve probability problems that produce a quadratic in $p$?

Without replacement — what changes between draws?
